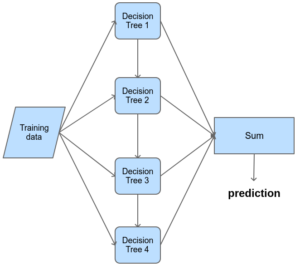

Random Forest bags the data with replacement and creates a Decision Tree from each bagged dataset. While predicting, it collects the answers from all the trees and takes the majority result as the final prediction. In this article, we will see this in detail with an example. To know about decision trees, please check Decision Tree.

A solution to Overfitting with the help of Bootstrap Aggregating/Bagging:

The main problem with a Decision Tree is that it is "prone to overfitting". Even though we can control this using parameters, it remains a noticeable problem. Overfitting happens when the model learns too much from the training data, which means it also learns the noise in the training data. When test data comes into play, what the model learned from that noise makes its predictions fail. Random Forest gives us a way to overcome this problem.

We can say, however, that not all of the data is noise. In the picture above, you can see that 4 datasets have been drawn from a dataset of size 26. A data point that exists in one dataset may or may not exist in the other datasets. We did not place any condition when creating the datasets: a point given to one dataset is not removed from the main dataset. That is why we call it sampling "with replacement". In other words, we copy data points randomly from the main population to create n datasets, and there is no restriction on the number of datasets sampled from the population.

After bagging, we have n datasets, each with the same number of data points. Each dataset is almost certainly unique, because some of its data points differ from those in the other datasets. Using the 4 samples above, a Decision Tree is modelled for each sample dataset, so each Decision Tree acts as a separate model. Together, n weak learners make a strong learner.

The main difference between a Decision Tree and a Random Forest is that Random Forest is a bagging method: each tree is built from a bootstrap sample of the original dataset rather than from the whole dataset, and this property helps Random Forest overcome overfitting. Instead of building a single Decision Tree, Random Forest builds a number of Decision Trees, each with a different set of observations. As the datasets do not all contain the same data, the noisy data will not be present in every Decision Tree. A few Decision Trees may still be trained on noisy data, but because of majority voting their effect on the final prediction may be neglected. In the Random Forest picture, 4 Decision Trees give results, and the result that wins the majority vote is set as the final prediction.

In this article, we have learned that Random Forest makes a strong learner from many weak learners. It gives high accuracy because we do not rely on the result of one Decision Tree but take the majority result of all of them. It also overcomes the overfitting problem of Decision Trees.
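The bagging and majority-voting steps can be sketched roughly in plain Python. This is only an illustration under some assumptions, not a full Random Forest: the helper names (`bootstrap_sample`, `fit_stump`, `majority_vote`, `bagged_predict`) and the toy data are hypothetical, and a one-split "stump" stands in for a real Decision Tree.

```python
import random
from collections import Counter


def bootstrap_sample(data, rng):
    """Draw len(data) points from data at random, with replacement."""
    return [rng.choice(data) for _ in data]


def fit_stump(sample):
    """Fit a one-split tree on (x, label) pairs.

    Hypothetical stand-in for a full Decision Tree: it picks the threshold
    with the fewest training errors and predicts 1 when x > threshold.
    """
    xs = sorted({x for x, _ in sample})
    best_threshold, best_errors = xs[0], len(sample) + 1
    for threshold in xs:
        errors = sum((x > threshold) != bool(label) for x, label in sample)
        if errors < best_errors:
            best_threshold, best_errors = threshold, errors
    return best_threshold


def majority_vote(votes):
    """Return the most common prediction (ties go to the first one seen)."""
    return Counter(votes).most_common(1)[0][0]


def bagged_predict(thresholds, x):
    """Ask every stump for a prediction and take the majority result."""
    return majority_vote([int(x > t) for t in thresholds])


rng = random.Random(0)  # fixed seed so the run is repeatable

# Toy dataset of size 26: the label is 1 when x >= 13.
data = [(x, int(x >= 13)) for x in range(26)]
data[3] = (3, 1)  # one deliberately noisy label

samples = [bootstrap_sample(data, rng) for _ in range(4)]  # 4 bagged datasets
thresholds = [fit_stump(s) for s in samples]               # one model per dataset

print(thresholds)
print(bagged_predict(thresholds, 20), bagged_predict(thresholds, 5))
```

Because each bagged dataset is drawn with replacement, the noisy point at `x = 3` may appear in some samples and be missing from others, which is the effect the voting step relies on. With an even number of trees a tie is possible, so an odd number of trees is a common choice in practice.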
